This AI Paper from Johns Hopkins and Microsoft Revolutionizes Machine Translation with ALMA-R: A Smaller LLM Outperforming GPT-4

Machine translation, a crucial aspect of Natural Language Processing, has advanced significantly. Yet a primary challenge persists: producing translations that go beyond mere adequacy and approach near perfection. Traditional methods, while effective, are often limited by their reliance on large datasets and supervised fine-tuning (SFT), which caps the quality of the output.

Recent developments in the field have brought attention to moderate-sized large language models (LLMs), such as the ALMA models, which have shown promise in machine translation. However, the efficacy of these models is often constrained by the quality of reference data used in training. Researchers have recognized this issue and explored novel training methodologies to enhance translation performance.

The researchers introduce Contrastive Preference Optimization (CPO), a new approach to training machine translation models. This method diverges from traditional supervised fine-tuning, which focuses on aligning model outputs with gold-standard references. Instead, CPO trains models to distinguish between merely ‘adequate’ and ‘near-perfect’ translations, pushing the boundaries of translation quality.

The mechanics of CPO are intriguing. It employs a contrastive learning strategy that utilizes hard negative examples, a significant shift from the usual practice of minimizing cross-entropy loss. This approach allows the model to develop a preference for generating superior translations while learning to reject high-quality but not flawless ones.
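As a rough illustration of this idea, the sketch below computes a CPO-style loss for a single preference pair. It assumes a form that combines a DPO-like preference term, computed without a reference model, with a negative log-likelihood term on the preferred translation. The function names, the `beta` value, and the example log-probabilities are illustrative assumptions and are not taken from the authors' code.

```python
import math


def log_sigmoid(z: float) -> float:
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))


def cpo_style_loss(logp_preferred: float, logp_rejected: float, beta: float = 0.1) -> float:
    """Sketch of a CPO-style loss for one (preferred, rejected) translation pair.

    logp_preferred: model log-probability of the near-perfect translation
    logp_rejected:  model log-probability of the good-but-flawed translation
                    (the "hard negative")
    beta:           scale on the log-probability margin (assumed value)
    """
    # Preference term: small when the model scores the preferred
    # translation well above the rejected one.
    prefer = -log_sigmoid(beta * (logp_preferred - logp_rejected))
    # Likelihood term: keeps the model close to the preferred translation.
    nll = -logp_preferred
    return prefer + nll


# Hypothetical sequence log-probabilities for one source sentence.
loss_good_ranking = cpo_style_loss(logp_preferred=-5.0, logp_rejected=-9.0)
loss_bad_ranking = cpo_style_loss(logp_preferred=-5.0, logp_rejected=-3.0)
print(loss_good_ranking < loss_bad_ranking)
```

With the preferred log-probability held fixed, the loss is lower when the model ranks the preferred translation above the hard negative, which is the behavior the contrastive objective is meant to encourage.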

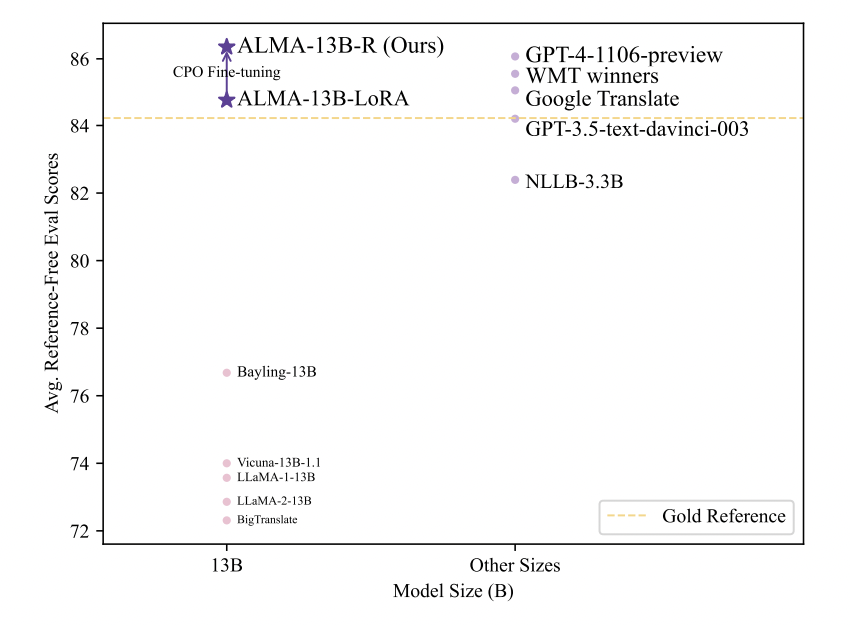

The results of applying CPO are strong. When applied to ALMA models, the method delivers a substantial gain in translation quality. The enhanced model, referred to as ALMA-R, matches or surpasses the performance of leading models in the field, such as GPT-4. Notably, this improvement was achieved with minimal resource investment.

A detailed examination of ALMA-R’s performance shows it outperforming existing methods. It excels on various test datasets, including those from the WMT competitions. These results highlight the potential of CPO as a transformative tool in machine translation, offering a new direction away from traditional training methodologies that rely heavily on extensive datasets.

In conclusion, the introduction of Contrastive Preference Optimization marks a significant advancement in the field of neural machine translation. By focusing on the quality of translations rather than the quantity of training data, this novel methodology paves the way for more efficient and accurate language models. It challenges existing assumptions about machine translation, setting a new benchmark in the field and opening up possibilities for future research and development.

Muhammad Athar Ganaie, a consulting intern at MarktechPost, is a proponent of Efficient Deep Learning, with a focus on Sparse Training. Pursuing an M.Sc. in Electrical Engineering, specializing in Software Engineering, he blends advanced technical knowledge with practical applications. His current endeavor is his thesis on “Improving Efficiency in Deep Reinforcement Learning,” showcasing his commitment to enhancing AI’s capabilities. Athar’s work stands at the intersection of “Sparse Training in DNNs” and “Deep Reinforcement Learning”.